AR (Augmented Reality) has been experimented with for almost a decade. I recall working over the summer in 2013 at an app-development company that was playing with the idea of using smartphone cameras to overlay an animated character on top of a QR-code trading card. We’ve seen games like “Pokémon Go” in 2016 giving us cute little monsters seemingly in the same space as us. The applications have been basic so far, relying on simple overlays of CGI, but I suspect we will see greater innovation and growth in this field in the next 3-5 years, because both Apple and Google are starting to heavily promote these applications.

It’s crazy to think how quickly the market is adapting to change. VR (Virtual Reality) was the big buzzword throughout 2015 and 2016. Even now, companies are preparing headset hardware releases to mark their first step in the market. This technology was good enough to make us feel like we were in a different world: the gyro-sensors were on point, and the headset displays were reaching clarity even at an inch from an eyeball.

Phone makers, namely Samsung, were cornering the market in their own way, allowing you to use your (premium) smartphone to get the same experience without shelling out for a PC-solution. I’ve tried an Oculus Rift, PSVR, and a Samsung Gear; the differences for general viewing are small enough for any of them to impress. Personally, I still never liked the idea of paying nearly $1,000 for a phone to call people (I still opt for the budget-selections at under $200 CDN where possible), and even now, only higher-end phone models actually have the gyroscope and accelerometer sensors required to use any VR/AR application. I didn’t like the PC options much either: I didn’t understand why minimum PC requirements called for $1,000 hardware when my desktop PC at half the price was able to work just fine with a headset, or why consumer-headsets alone were more expensive than said-PC in my office (although they have since dropped in price as competition sped up).

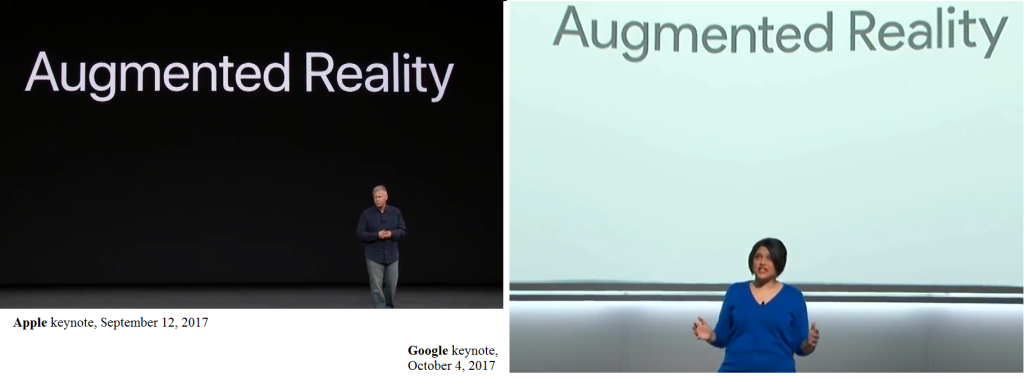

Even now, nearly two years later, while VR clearly made a mark, it didn’t shatter everything like tech-enthusiasts hoped. Costs are too high, applications too limited, and those headsets still too big and goofy with their cords running down your back. And the promise of AR loomed on the horizon… it would require less computation, display information alongside the real world around us, and (presumably) be available in a portable, less bulky fashion. Maybe this is why Apple and Google, on September 12 and October 4, 2017 respectively, made “Augmented Reality” a core part of what their latest devices could do.

On the 10th anniversary of the original iPhone, Apple announced the iPhone X (and also the iPhone 8, a phone made obsolete by the X seconds after its public reveal). Along with it, Apple declared official iOS support for making AR applications (… this wasn’t done yet?!??!), and showed off some examples. Yes, you can now play games with fantasy worlds sprawled on your kitchen table. You can also see stats on top of football players during a live game, or see constellations explained to you as you drag your phone along the real night sky. When Google announced the Pixel 2 phone, they showed similar but more playful applications, like the ability to put live face-paint masks on you, or the ability to use new AR “stickers” to place 3D-animated cartoons alongside you.

The thing is, none of these applications are entirely new as of September 2017. They’ve existed for years now, and they’ve kept improving up to today. Other existing technologies, like Google Translate, face-mapping, and voice-recognition, have also been around, but most people have not yet realized it or bothered to use them in their daily lives. These technology giants (and game companies too) have gotten really good at promoting the latest tech during their press conferences to get us excited. Science-fiction concepts are becoming real, and are available at your local store for only $80 a month.

Google in particular excited me. One of their AR “sticker” options was to plop cartoon versions of “Stranger Things” characters, based on the live-action hit television show. You could put them next to you, and they would “react” to you… well, it didn’t really look like that, it was more that the character was just running on a pre-animated loop while arbitrarily facing the phone. But then you could put a SECOND character next to it, and they would react to each other. Again, this was pre-animated, but it was the start of these characters feeling alive.

Google also had examples of different voices for your Google Home device, including voices from Disney and DC characters. It’s unusual to see Google be the one to chase after third-party brands, but it was used to good effect. And, I assume, many of those voice clips will be generated and not pre-recorded. I still firmly believe the time will come when voice acting will be replaced by computers utilizing old recordings to make new sentences, where actors might be compensated for use of their likeness.

From these demos of AR, I suspect the more practical uses will be adopted first. The game demos are still stuck with overlaying graphics, and don’t really interact in a meaningful way with the people in the frame or the room they are standing in, but I hope this will change soon. I still don’t like the idea of paying more for a phone than a laptop to get access to this technology, but with it (and also the Microsoft HoloLens hardware, which is limited but does work from what I’ve tried), I think we are slowly reaching a point where, eventually, everyone will be using it, the same as how everyone has “a” smartphone today.

Specifically, I want to see virtual characters that we can see, that can talk to us, that we can talk to. The example in the recent blockbuster “Blade Runner 2049” is a little extreme, but I think for productivity and emotional support, having a Siri or Cortana with a visible presence and a personality could reshape a lot about how we live our lives. We did not see that yet this year, but all the pieces are there. We can overlay computer characters. We can recognize humans in a live picture, and their expressions. We can recognize obstacles in a space. We can generate speech from any given sentence. We can have programs learn over time. It’s all there, and can be done from a computer that fits in your pocket… so why haven’t we reached the point where these things come together?

Further, we are restricted to only computer-animated characters. Live-action and 2D are out of the question… except they’re not. At a recent talk at the University of Michigan, I saw a sneak peek at prototype technology from Microsoft (the video is online if you search for it) that showed the ability to record a human presence from multiple cameras, capturing the 3D shape and mapping live textures onto it. The result is a surprisingly real AR version of a live (or recorded) person that you could walk around. The idea was that this would allow real-time meetings from anywhere in the world (the demo showed an employee playing with his daughter in a different city), or to record “memories” to view later. So then, live-action in AR can and will come. All that’s left is having 2D characters… AND I’M THE ONE GUY IN THE WORLD MAKING THAT HAPPEN! Cool!

It can be frustrating to see technology move so fast and yet so slow. The upside is that it gives me time. I’ve always thought I was born just a few years too late to take advantage of new ideas and technologies right away, and I always seem behind even now. A quiet me during the last few weeks fist-pumped the air in celebration, seeing that I am still on top of something no one seems to even be thinking of yet. One day, either through my developing “3D Cel Animation” techniques or some other method not yet seen, I want to have Jiminy Cricket singing to comfort me on my deathbed, every bit like how I saw him in the Disney movie and not a bit less. It will happen… my only fear now is that I might be the only person who is crazy enough to want such a thing.